GURU

From 2017 through 2019 I collaborated with a team of researchers from multiple institutions (including Harvard, Brandeis, and SRI International) to compete for the IBM Watson AI XPRIZE, an international competition whose mission is to incentivize AI technologies for social good. Our entry, GURU, envisioned the next generation of human-computer collaboration: a software agent capable of acting as a full partner in a wide range of intellectually challenging and creative tasks across the arts, humanities, and sciences. I served as project lead, presenting our research and development in all annual reports submitted to the XPRIZE organization. From a starting pool of 160 teams, we progressed to the top 37 entries.

MUSICA

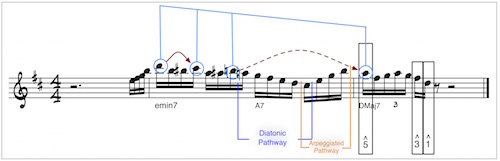

MUSical Improvising Collaborating Agent (MUSICA) (2015-2020) was a research project (Communicating with Computers, BAA-15-18) to build a system that interacts with a human performer in real time, both musically and through natural language. Our research drew on techniques from machine learning, artificial intelligence, computational creativity, cognitive science, and music theory to develop a system that communicates with human performers to make music that we understand to be meaningful and context-aware. For press on this project, read more here and here.

Virtual Harlem

Bryan Carter conceived Virtual Harlem as a virtual representation of 1920s Jazz Era Harlem. One of the earliest virtual reality projects developed for humanities education, it has existed as a Second Life project and was then ported to OpenSim and CAVE facilities. The Creative Computing Lab is porting existing assets from Virtual Harlem into the Unity 3D Game Engine and using Google Earth to build a VR environment that maps accurately onto the geographic area around 125th Street. The goal of migrating Virtual Harlem to an open source platform is to enable greater access to the environment as a space for immersive education and experience.

Trad & Soul

In June 2015, Jamie Aditya Graham and I spent a week rehearsing, arranging, and recording seven tracks that became the Trad & Soul EP. For several years we had been discussing the possibility of collaborating, and our discussions always came back to our shared passion for the traditional music of the U.S.: early jazz, blues, and gospel. Jamie is well known in Indonesia and throughout Asia for his tenure as an MTV VJ, his acting, and his amazing voice. He is an R&B and soul singer with great range and style. The amalgamation of his soulful modern voice and my arrangements, taken straight from the traditional jazz repertoire, sounds unique and, to my ear, surprisingly coherent.

The EP is currently available for download and streaming on all major digital distribution services, including Apple Music.